Does Sharing a ChatGPT Conversation Link Mean Waiving Your Privacy?

(By Wang Ting) In late July 2025, the “Share” feature of OpenAI’s ChatGPT triggered a global privacy controversy. Conversation links generated by users were indexed by search engines such as Google, leading to the large-scale exposure of sensitive information, including medical records and trade secrets. Although OpenAI promptly removed the option that allowed shared conversations to be publicly searchable, debate has continued over whether clicking “Share” should be deemed a voluntary waiver of privacy.

At the heart of the controversy lies a fundamental question: when users click the “Share” button, do they genuinely intend to send the content to specific recipients, or do they unknowingly make it accessible to the entire internet? More importantly, does the interface design satisfy the legal standard of “informed consent”? The following explores how the issue would be analyzed under Chinese law if a similar scenario occurred in China.

1. Overview

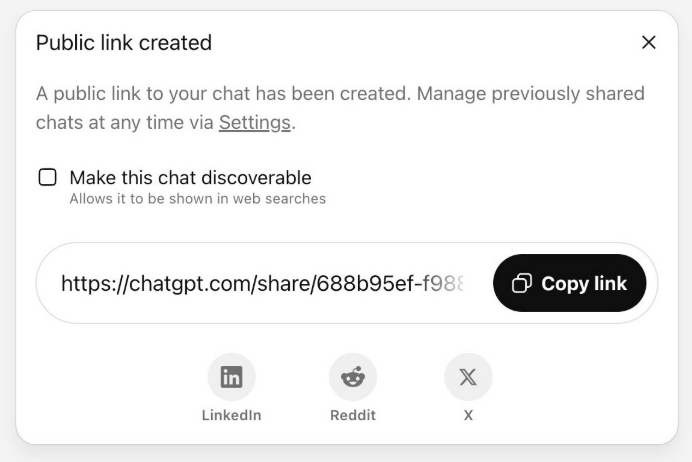

The controversy centers on ChatGPT’s “Share” feature. When a user clicks “Share” to generate a conversation link, a checkbox labeled “Make this chat discoverable” is displayed, accompanied by small grey text stating “Allows it to be shown in web searches”. If the user selects this option, the conversation is converted into a publicly accessible webpage that can be indexed by search engines and retained online indefinitely.

2. Legal Analysis: Does a “Public Link” Equal a “Waiver of Privacy”?

(1) Does Sharing a Link Constitute “Voluntary Disclosure”?

Many people assume that once users click the “Share” button, the responsibility naturally lies with them. However, China’s Personal Information Protection Law (“PIPL”) requires that the processing of personal information follow the principles of legality, legitimacy, and necessity, and Article 14 provides that, where processing is based on consent, the consent must be given voluntarily and explicitly, with full knowledge. The key issue is whether users clearly and unambiguously understand that “sharing a link” may cause the conversation to appear in search engine results. The design of ChatGPT’s feature creates a significant cognitive gap precisely at this point.

First, ordinary users typically perceive “sharing” as sending information to specific recipients, rather than making it accessible to the entire internet. The platform merely states “Allows it to be shown in web searches” in small, light grey text, without prominently highlighting the public scope of the shared link or the potential risks involved, resulting in a substantial discrepancy between users’ expectations and the actual consequence.

Second, generating a link involves a three-step process: “click Share → select the option → confirm publication”. However, the interface design and workflow may easily lead users to believe that completing only the first two steps is sufficient to generate a private link. This mismatch between the multi-step process and user intuition breaks the chain of consent: users believe they are creating a link visible only to specific recipients, while in reality they may have agreed to make the content publicly accessible.

In summary, through ambiguous prompts and misleading interaction design, ChatGPT creates a fundamental discrepancy between users’ understanding of “sharing” (sending information to specific recipients) and its actual consequence (public disclosure on the internet), and therefore fails to obtain valid consent from users. Accordingly, clicking the “Share” button cannot be regarded as a genuine expression of intent based on a clear and unambiguous understanding of the consequences, and therefore should not be considered “voluntary disclosure”.

(2) Did the Platform Fulfil Its Data Protection Obligations?

This incident also highlights differences in privacy regulation across jurisdictions. The United States generally prioritizes innovation and commercial development, adopting a relatively flexible regulatory approach and relying more on ex-post market oversight and platform self-regulation. While this environment supports the rapid development of generative AI such as ChatGPT, it also increases the risk of data misuse. By contrast, the European Union, guided by the General Data Protection Regulation (GDPR), places users’ rights at the center and imposes strict requirements such as “privacy by design”, representing a form of ex-ante regulation that seeks to define the boundaries of technological expansion before risks materialize.

Despite these differences, the core obligation of platforms as data processors remains clear: they must follow the principle of “minimal disclosure by default”. In other words, platforms should minimize privacy risks unless users actively choose otherwise. However, in the ChatGPT incident, the platform failed to adequately address deficiencies in user notification and permission control.

First, when designing the “shareable link” feature, did the platform clearly inform users that such links might be indexed by search engines? Did it implement encryption measures or technical mechanisms to prevent crawling? If not, users might have given “consent” without being fully informed. Under the PIPL, the disclosure of sensitive personal information requires the user’s separate consent. Even for ordinary personal information, such as content that may contain business information, valid consent given with full knowledge of the consequences is required.

For example, a lawyer handling an indigenous land dispute once entered strategic plans and other confidential information into ChatGPT. The lawyer had no intention of making this information public, yet the platform’s mechanism, under which a generated link is shareable by default, led to its accidental exposure. In another example, a user consulted the AI about the psychological effects of psychedelic drugs, a topic that may involve sensitive personal information and therefore requires separate consent before disclosure. These examples reveal significant deficiencies in the platform’s ability to capture users’ genuine intent.

Second, by embedding the “public link” option within the ordinary “share conversation” function, the platform blurred the boundary between private sharing and public dissemination. Article 6 of the PIPL provides that personal information processing must be limited to the minimum scope necessary to achieve the intended purpose. Treating public disclosure as equivalent to ordinary sharing clearly exceeds that scope and violates the principle of data minimization. The platform also failed to clearly and prominently inform users of the risk that their data might become publicly accessible.

Overall, the design of ChatGPT’s shareable-link feature appears inconsistent with these requirements. Insufficient notification, blurred boundaries between functions, and inadequate risk prevention contributed to the unintended disclosure of user information, suggesting shortcomings in how the platform fulfilled its data protection obligations.

3. Privacy Blind Spots in Platform Design

The exposure of ChatGPT conversations was not caused by hacking attacks but by deficiencies in internal product design and management. Similar incidents are not unprecedented; there have previously been reports that chat records from other AI systems were indexed by search engines.

At the root of the problem is the lack of careful consideration during product design regarding whether shared links can be publicly accessible across the internet. In other words, flaws in the platform’s default configuration directly lead to privacy exposure. For platforms, the cost of such oversight may ultimately far exceed the benefits gained from product experimentation. For users, a seemingly harmless act of sharing may cause sensitive data to circulate widely online.

More seriously, this type of “default disclosure” represents a systemic vulnerability: it does not depend on attackers and may not generate abnormal system logs, yet it effectively breaks through the boundary of user privacy.

4. Neither Platforms nor Users Should Rely on “Technological Neutrality”

The development of AI platforms must be grounded in the protection of user privacy. When designing and launching new features, platforms should clearly inform users through prominent prompts of the risks associated with public links and obtain valid consent from users. They should also impose strict default access restrictions on privacy-related data and implement mechanisms to promptly interrupt or limit abnormal batch access or automated crawling.
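As a purely illustrative sketch of what “strict default access restrictions” could look like in practice, the Python fragment below models a shared-conversation page whose default is the least-exposing option. The names `Visibility`, `ShareSettings`, and `response_headers` are hypothetical and do not describe how ChatGPT or any other platform is actually implemented. The only real-world element assumed here is the `X-Robots-Tag` HTTP response header, which major search engines honor as a request not to index a page.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict


class Visibility(Enum):
    LINK_ONLY = "link_only"        # reachable only by people who hold the URL
    DISCOVERABLE = "discoverable"  # may be indexed by search engines


@dataclass(frozen=True)
class ShareSettings:
    # Hypothetical settings object: both defaults are the least-exposing choice.
    visibility: Visibility = Visibility.LINK_ONLY
    indexing_consent_given: bool = False  # separate, explicit opt-in by the user


def response_headers(settings: ShareSettings) -> Dict[str, str]:
    """Build HTTP response headers for a shared-conversation page."""
    # Indexing is allowed only when the user chose the public option AND
    # separately confirmed it; either one alone is not enough.
    indexable = (
        settings.visibility is Visibility.DISCOVERABLE
        and settings.indexing_consent_given
    )
    if indexable:
        return {"Cache-Control": "public, max-age=300"}
    # Default path: ask crawlers not to index the page or follow its links.
    return {
        "X-Robots-Tag": "noindex, nofollow",
        "Cache-Control": "private, no-store",
    }
```

Under this assumed design, a link created with default settings always carries the no-index instruction, and public discoverability requires two affirmative signals from the user rather than one unchecked or overlooked box. A header of this kind only addresses well-behaved crawlers; limiting abnormal batch access would need separate measures such as rate limiting.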

Users, for their part, should not blindly assume that technology is neutral. Wherever possible, sensitive data such as ID numbers or client information should not be entered into AI conversations. When using sharing features, users should remain aware that the content may become public. If a data leak is suspected, users should promptly preserve evidence and file a complaint with the platform.

Ultimately, while platforms are obligated to safeguard the boundaries of user data, users should also remain alert to the hidden risks behind seemingly simple actions such as “sharing”.

Lawyer Contacts

86-21-52134918

youyunting@debund.com/yytbest@gmail.com
